Emotion detection within a mobile platform may be used by content

developers to provide a more fulfilling user experience, such as,

updating an interface and/or experience in real-time based on user

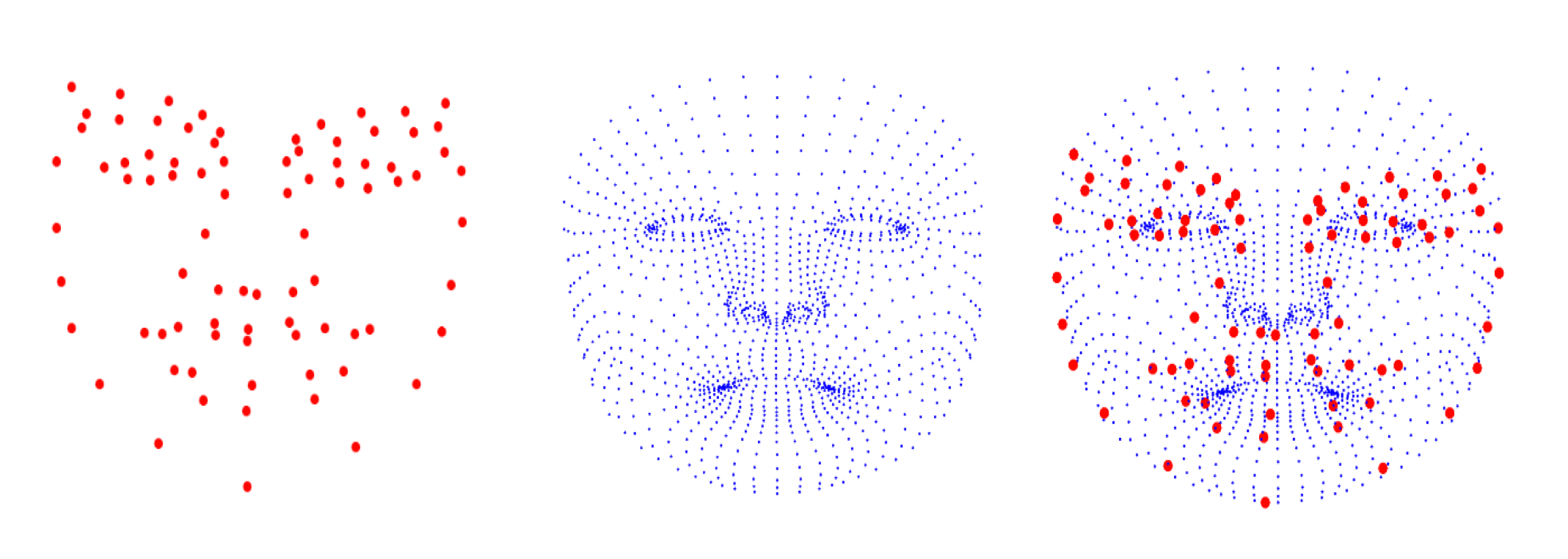

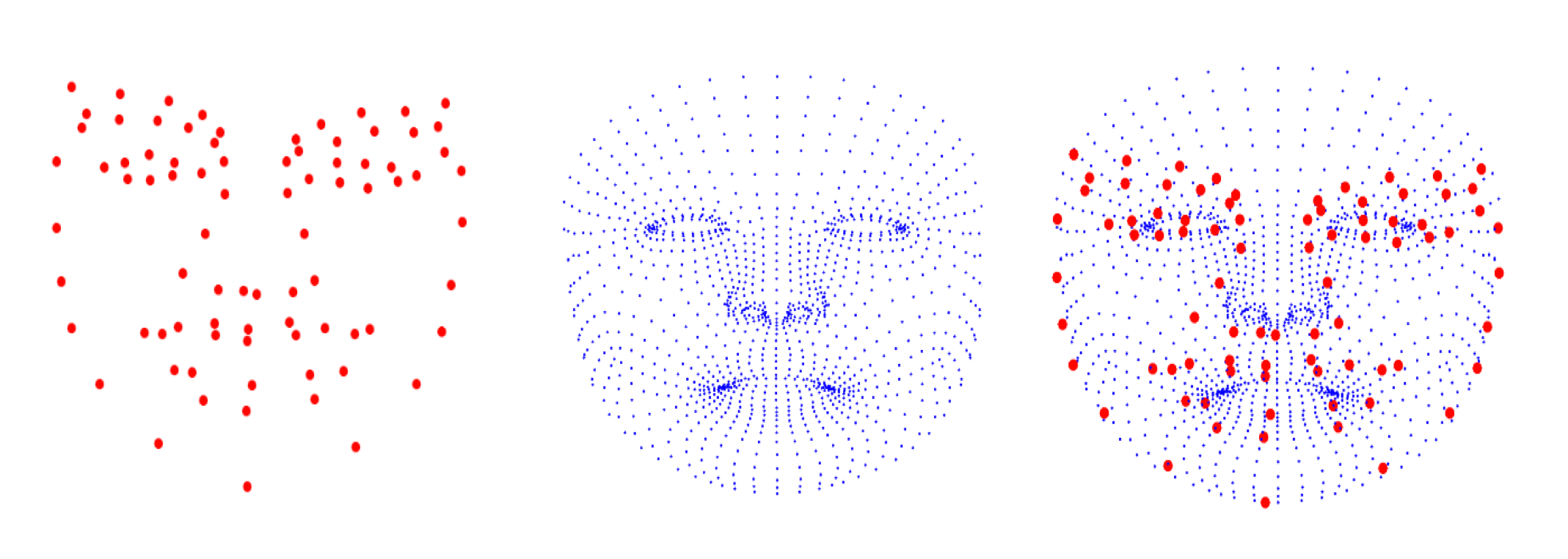

emotional feedback. The goal of this project is to create a

facial expression recognition (FER) system that can accurately and

efficiently process depth sensor data from a smartphone in order

to elicit users’ emotional state, specifically the six Ekman

emotions.

Collaborators

Publications

Demonstrations