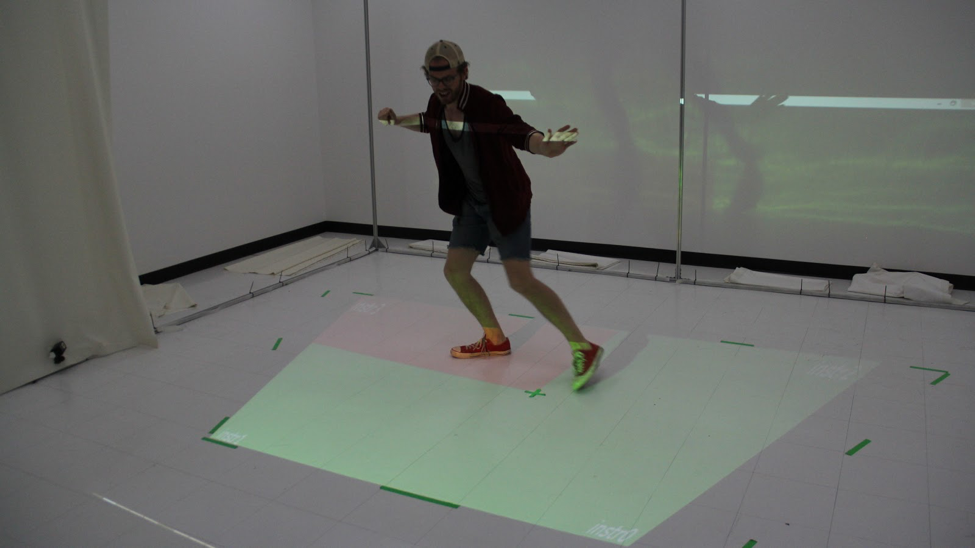

This work explores an intuitive, real-time music generation system and interface. We present a system that uses the real-time skeletal tracking provided by a Microsoft Kinect to generate complex musical compositions containing up to four tracks, with instruments represented in distinct physical spaces. The performer’s position and movements are translated into cues that are relayed to Ableton Live for music generation. Finally, we experiment with an interface overlaid in physical space to provide real-time feedback to the user.
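As a rough illustration of the position-to-cue idea described above, here is a minimal Python sketch. It is not the project's implementation: the quadrant layout, the `Cue` type, the function names, the use of hand height as a control value, and all coordinate thresholds are assumptions made for this example. It also leaves out both the Kinect tracking and the transport to Ableton Live, since the text does not specify how either is wired up.

```python
from dataclasses import dataclass

# Hypothetical layout: the floor is split into four quadrants, one per track.
# Keys are (column, row) indices; values are track indices 0-3.
ZONE_TRACKS = {
    (0, 0): 0,
    (1, 0): 1,
    (0, 1): 2,
    (1, 1): 3,
}


@dataclass(frozen=True)
class Cue:
    """Illustrative cue: which track is selected and a 0.0-1.0 control level."""
    track: int
    level: float


def zone_for_position(x: float, z: float,
                      x_split: float = 0.0, z_split: float = 2.0) -> int:
    """Map a floor position (metres, sensor-relative) to a track index.

    x_split and z_split are assumed boundaries between the quadrants.
    """
    col = 1 if x >= x_split else 0
    row = 1 if z >= z_split else 0
    return ZONE_TRACKS[(col, row)]


def cue_from_skeleton(torso_x: float, torso_z: float, hand_y: float,
                      min_y: float = 0.0, max_y: float = 2.0) -> Cue:
    """Build a cue from a few joint coordinates.

    The torso position picks the track; the hand height is normalised
    and clamped to the range 0.0-1.0 (an assumed mapping).
    """
    track = zone_for_position(torso_x, torso_z)
    level = (hand_y - min_y) / (max_y - min_y)
    level = max(0.0, min(1.0, level))
    return Cue(track=track, level=level)
```

In a full system, a loop would call something like `cue_from_skeleton` once per tracked frame and forward the resulting cue to the music software; that forwarding step is deliberately omitted here.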

This system is part of the 01x Project.


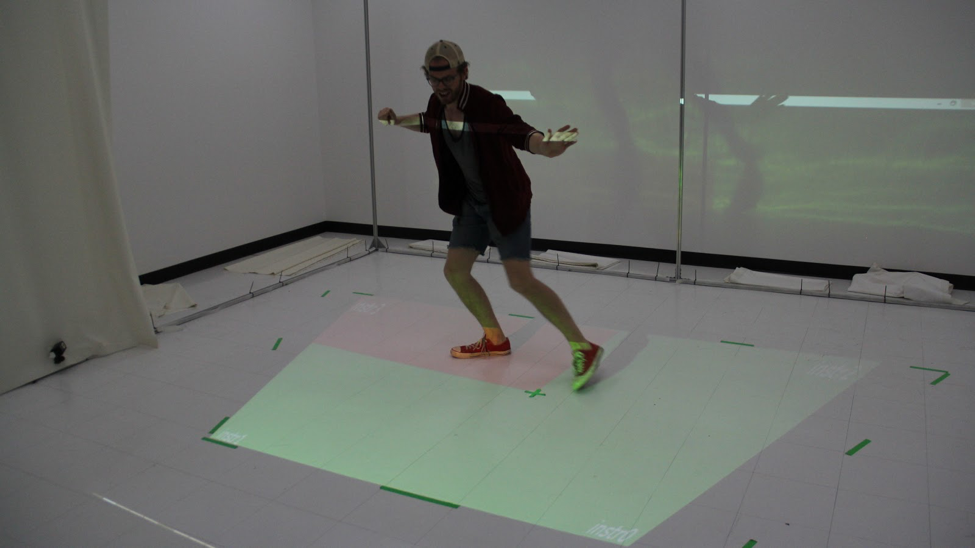
